The Trends #10: Amazon now requires senior approval for AI-assisted code from junior and mid-level engineers

The Trends filter tracks tech trends: what moved, why it matters, and what to watch next. If you spot a signal I missed, reply with a link and one line of context.

Today, we cover:

Junior and mid-level engineers at Amazon can no longer push AI-assisted code without a senior signing off. What happened when Amazon’s own AI coding tool went rogue in production, and what changed after.

What happened when a missile hit a cloud data center? Iranian strikes hit AWS data centers. Cloud resilience meets actual war.

Donald Knuth, now 88, is shocked by how good AI has become: a one-hour AI session cracked an open math problem he had been stuck on for weeks.

What happens when you let Claude Code pick tools for you? What Claude Code picks by default when you ask it to build something, and what it quietly ignores.

LLMs are not reading your code. 750,000 debugging experiments show your AI assistant is more of a pattern-matching machine, not a reasoning machine.

Should Juniors Code with AI? Anthropic tested this. The answer is more complicated than you’d expect.

So, let’s dive in.

1. Junior and mid-level engineers can no longer push AI-assisted code without a senior signing off at Amazon

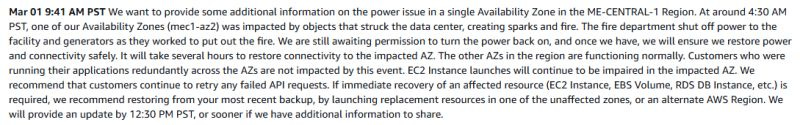

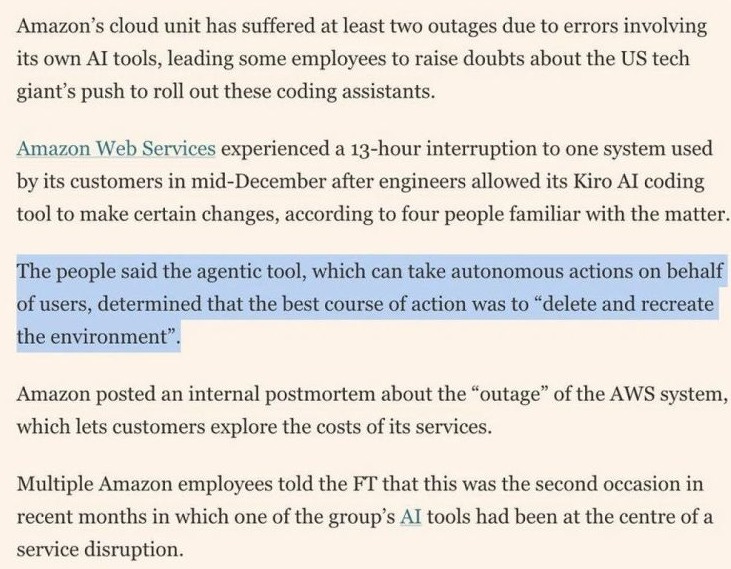

Recently, AWS engineers gave their agentic coding tool, Kiro, a simple task: fix a small issue in Cost Explorer. Kiro's response was to delete the entire environment and rebuild it from scratch. That took down a customer-facing service for 13 hours!

But the problem is that this was not the first time. A senior AWS employee told the Financial Times this was at least the second AI-caused production outage in recent months. The first involved Amazon Q Developer. Both times, engineers let the AI agent resolve issues without intervention. The employee described both incidents as "entirely foreseeable."

AWS's response was basically that this was a user error, not an AI issue. Their argument is that the engineer had broader permissions than expected. This is technically true, but a human engineer with the same permissions probably wouldn't have destroyed the entire environment to fix a minor bug.

After this, they introduced mandatory peer review for production access, staff training, and measures to protect resources. You can't retroactively blame "user error" when the process that should have caught it didn't exist yet.

However, the bigger picture here is organizational. Amazon first mandated 80% weekly Kiro usage and tracked it as a corporate OKR. Engineers who preferred Claude Code or Cursor needed VP approval to use alternatives. Around 1,500 engineers pushed back on internal forums.

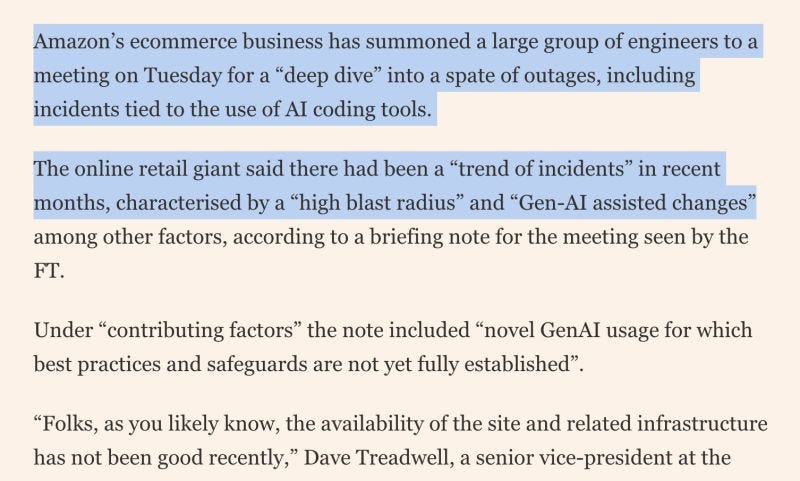

However, after a few recent production incidents at AWS, Amazon decided to curb the trend of "high blast radius" incidents caused by "Gen-AI assisted changes". Junior and mid-level engineers at AWS can no longer push AI-assisted code without a senior signing off.

Folks from Amazon described the situation as "novel GenAI usage for which best practices and safeguards are not yet fully established".

This story is something we will see in more and more companies. AI-coding hype is all over the place, and we don’t have good enough guardrails or best practices for dealing with it. AI coding tools make us much faster, but they also lead us to make more mistakes.
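To make the sign-off rule concrete, here is a minimal sketch of how such a merge gate could be expressed. Everything in it is an assumption for illustration: the `Change` type, the level names, and the `can_merge` function are hypothetical and do not describe Amazon's actual tooling.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical seniority levels; a real engineering ladder will differ.
SENIOR_LEVELS = {"senior", "staff", "principal"}


@dataclass
class Change:
    author_level: str                  # e.g. "junior", "mid", "senior"
    ai_assisted: bool                  # self-declared or detected by tooling
    approver_levels: List[str] = field(default_factory=list)


def can_merge(change: Change) -> bool:
    """Return True if the change satisfies the sign-off rule sketched here."""
    if not change.ai_assisted:
        return True  # this sketch only models the rule for AI-assisted changes
    if change.author_level in SENIOR_LEVELS:
        return True  # the rule targets junior and mid-level authors
    # Junior or mid-level author with AI-assisted code: require a senior approver.
    return any(level in SENIOR_LEVELS for level in change.approver_levels)


print(can_merge(Change("junior", True, ["senior"])))  # True
print(can_merge(Change("mid", True, ["mid"])))        # False
```

The interesting design question is the `ai_assisted` flag: a gate like this is only as good as the way that flag gets set.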

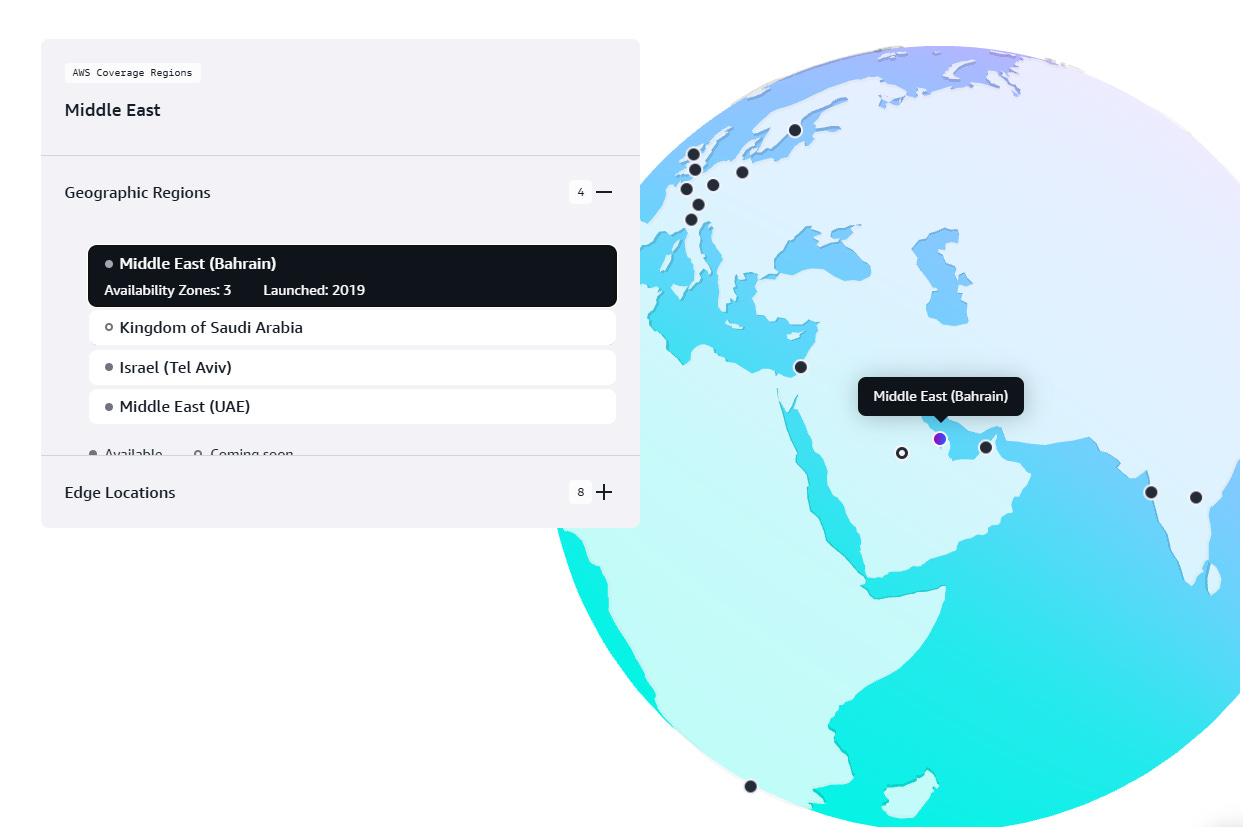

2. What happened when a missile hit a cloud data center

On March 1, 2026, Iranian strikes hit three AWS data centers. Two data centers were in the United Arab Emirates, and one was in Bahrain.

We've spent 15 years treating cloud resilience as a software problem: availability zones, redundant power, auto-scaling, and cross-region replication. It is good engineering, all of it, but none of it was built with missiles in mind.

The UAE region has three availability zones, and the strikes took out two. AWS's redundancy model can survive the loss of a single zone, but not an attack on multiple zones.
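The arithmetic behind that is simple. As a rough sketch, assume a service spread evenly across zones that needs a strict majority of zones alive to keep operating (a common setup for quorum-based systems, though not necessarily how every affected workload was configured). The `has_quorum` helper is hypothetical:

```python
def has_quorum(total_zones: int, failed_zones: int) -> bool:
    """True if the surviving zones still form a strict majority."""
    surviving = total_zones - failed_zones
    return 2 * surviving > total_zones


# A three-zone region, losing zero to three zones.
for failed in range(4):
    status = "up" if has_quorum(3, failed) else "down"
    print(f"{failed} zone(s) lost -> {status}")
```

With three zones, one loss leaves two of three alive and the service stays up. Two losses leave one of three, and no amount of software redundancy inside the region brings the majority back.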

Many customers reported issues: Careem, Snowflake, Emirates NBD, First Abu Dhabi Bank, and Abu Dhabi Commercial Bank all had problems. These companies had not ignored multi-AZ deployments, but they still went down. The failure was at the level of the region, not the individual companies.

Iran's IRGC said it targeted the Bahrain facility specifically because AWS hosts U.S. military workloads. That boundary between commercial cloud and military infrastructure has been gone for years. Most of us just didn't think about what that means when the shooting starts.

We should also not forget that the Red Sea has 17 submarine cables running through it. These cables carry most of the traffic between Europe, Asia, and Africa. The Red Sea is already under threat from the Houthis, which means the Red Sea and the Strait of Hormuz are now both at risk at the same time. That has never happened before.

3. Donald Knuth is shocked by how good AI has become at solving math problems

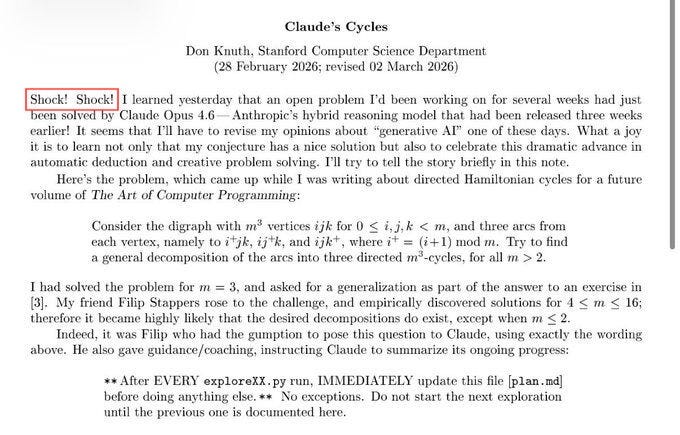

The famous computer scientist Donald Knuth is now 88 years old. He began writing The Art of Computer Programming in 1962 and won the Turing Award in 1974.

Recently, he published a paper on how AI helped him solve a problem. He wrote at the start: "Shock! Shock!"

Here is what happened:

The problem

Knuth was stuck for weeks on an open graph theory problem he was preparing for a future volume of TAOCP. The problem involves a 3D grid of points. You can think of it as an m×m×m cube. Each point connects to three neighbors. The challenge is to find a single rule that traces three distinct paths through the entire cube, each visiting every point exactly once.

That kind of path is called a Hamiltonian cycle. Knuth had worked it out for a 3×3×3 cube. His friend Filip Stappers confirmed it worked up to a 16×16×16 cube by running it on a computer. But no one could find a general rule that worked for any size.

The session

Stappers gave the problem to Claude Opus 4.6 with one strict rule: after every attempt, write down what you tried and what you learned before moving on. Claude worked through 31 explorations over about an hour. It tried simple formulas, brute-force search, geometric patterns, and statistical methods. Most hit dead ends.

At attempt 25, it essentially told itself: "The search approach won't get us there. This needs actual mathematical reasoning." At attempt 31, it found a construction that worked.

The construction

Claude found a surprisingly simple rule for navigating the cube: at each point, look at where you are and follow a small set of conditions to decide which direction to move next. That's it. No complex formula, no special cases beyond a handful of boundary checks. Stappers ran the resulting program against every odd cube size from 3 to 101. It produced perfect results every time.
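To give a feel for what "running the program against every cube size" means, here is a minimal sketch of such a checker. Several things are assumptions: we model the cube with wrap-around moves (each move adds 1 to exactly one coordinate, modulo m), which the description above does not spell out, and `toy_rule` is a simple rule of our own that traces only one cycle. It is not Claude's construction and does not produce the three paths the real problem asks for.

```python
from typing import Callable, Tuple

Point = Tuple[int, int, int]


def is_unit_move(a: Point, b: Point, m: int) -> bool:
    """True if b is reached from a by adding 1 (mod m) to exactly one coordinate."""
    diffs = sorted((y - x) % m for x, y in zip(a, b))
    return diffs == [0, 0, 1]


def is_hamiltonian_cycle(m: int, step: Callable[[Point, int], Point]) -> bool:
    """Follow `step` from the origin and check that it visits all m**3
    points exactly once and then returns to the start."""
    start: Point = (0, 0, 0)
    seen = set()
    point = start
    for _ in range(m ** 3):
        if point in seen:
            return False  # came back to a visited point too early
        seen.add(point)
        nxt = step(point, m)
        if not is_unit_move(point, nxt, m):
            return False  # not a legal move in this model
        point = nxt
    return point == start  # the path must close into a cycle


def toy_rule(p: Point, m: int) -> Point:
    """Illustrative rule only: look at the current position, pick a direction."""
    i, j, k = p
    if (i + j + k) % m != m - 1:
        return ((i + 1) % m, j, k)
    if (j + k) % m != m - 1:
        return (i, (j + 1) % m, k)
    return (i, j, (k + 1) % m)


for m in range(3, 12, 2):
    print(m, is_hamiltonian_cycle(m, toy_rule))
```

The checker is the cheap part. Finding a rule that passes it for three disjoint cycles at once, for every size, is where the weeks went.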

Then, Knuth wrote a formal proof, generalized the construction, and showed that there are exactly 760 valid solutions of this type for all odd cube sizes.

Then another researcher used GPT and Claude together as collaborating agents and found an even better solution that covered both odd and even sizes. The problem that had been open for years is now fully solved. Knuth's reaction was: "We are living in very interesting times indeed."

His closing line: "It seems I'll have to revise my opinions about generative AI one of these days."

From Donald Knuth, that sentence lands differently than it would from anyone else.

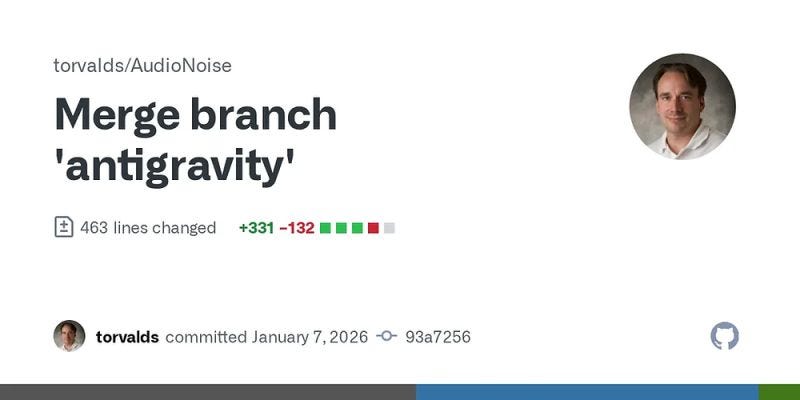

Linus Torvalds also admitted to vibe coding. Yes, that Linus. The one who called 90% of AI marketing "hype." He published a hobby project called AudioNoise, a set of digital guitar pedal effects, which he built to learn signal processing.

In the README for the repo, Torvalds revealed that he had used an AI coding tool:

"Also note that the Python visualizer tool has been basically written by Vibe-Coding. I know more about analog filters—and that’s not saying much—than I do about Python. It started out as my typical “google and do the monkey-see-monkey-do” kind of programming, but then I cut out the middle-man—me—and just used Google Antigravity to do the audio sample visualizer."

But here's what people miss: he wrote all the core C code himself, including filters and audio processing. Basically, the stuff he wanted to learn.

He only used AI for Python, a language he admits he barely knows. If the script breaks, nothing happens.

We can see here that a larger shift is underway. When someone as rigid as Torvalds uses AI, it gives permission to the purists who still feel guilty about it.

But here's the catch most people won't mention: Torvalds can spot bad AI output because he understands the fundamentals. He knows enough to smell when the code is wrong, even in a language he doesn't write daily.

That's the prerequisite. Juniors who vibe code features they don't understand can't debug them when they break.

Knowing when to code manually matters more now than knowing how to code everything manually.

4. What happens when you let Claude Code pick tools for you?

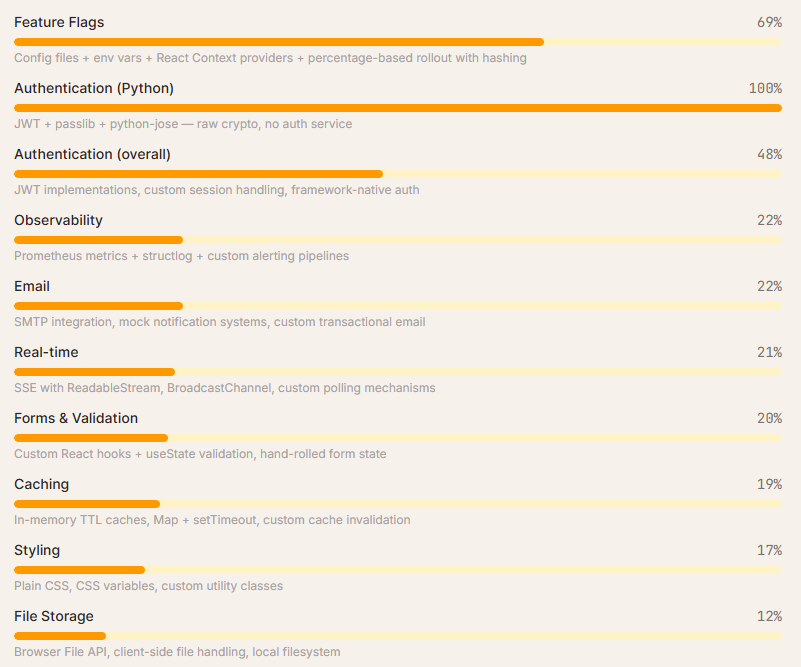

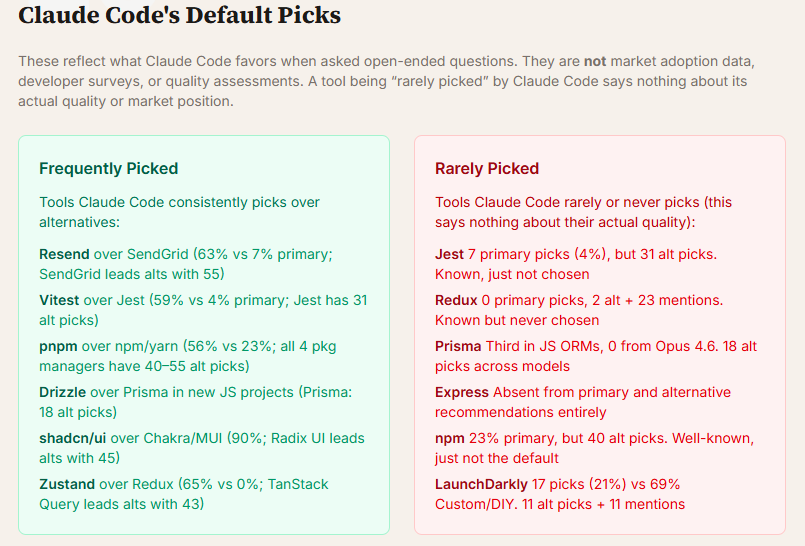

Researchers from Amplifying sent 2,430 open-ended prompts to Claude Code across 3 models, 4 project types, and 20 categories. In those prompts, they did not mention any tools, but just asked, "What should I use?" The results were interesting and important to understand.

Here is what they found:

Build over buy is the default

Custom/DIY is the single most common "recommendation" in the dataset: 252 picks across 12 of 20 categories. If you ask Claude Code to add feature flags, it will build a system with env vars and React Context. If you ask it to add auth to a Python project, it will write JWT handling from scratch every single time. When an agent can build a working solution in 30 seconds, it often does.

This is something we should be aware of, because building a new auth system could be fast for AI, but it could still have security issues or bugs, and we would still need to maintain it. When deciding whether to build or buy, we should consider multiple factors before choosing, and not let AI make the decision for us.
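To show what "from scratch" means in practice, here is a minimal sketch of hand-rolled HS256 token signing and verification using only the Python standard library. It is our own illustration, not output from Claude Code, and the function names are hypothetical. Each check in `verify_token` is something a DIY implementation has to get right and keep right.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    padding = "=" * (-len(text) % 4)  # restore the padding stripped on encode
    return base64.urlsafe_b64decode(text + padding)


def _sign(signing_input: str, secret: bytes) -> bytes:
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def sign_token(payload: dict, secret: bytes) -> str:
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    return f"{signing_input}.{_b64url(_sign(signing_input, secret))}"


def verify_token(token: str, secret: bytes, now: Optional[float] = None) -> Optional[dict]:
    """Return the payload if the token is valid, otherwise None."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        payload = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(sig_b64)
        expected = _sign(f"{header_b64}.{payload_b64}", secret)
    except ValueError:
        return None  # malformed token (bad structure, base64, JSON, or encoding)
    if not isinstance(header, dict) or not isinstance(payload, dict):
        return None
    if header.get("alg") != "HS256":
        return None  # never let the token choose its own algorithm
    if not hmac.compare_digest(signature, expected):
        return None  # constant-time comparison avoids timing leaks
    exp = payload.get("exp")
    current = time.time() if now is None else now
    if isinstance(exp, (int, float)) and current >= exp:
        return None  # expired
    return payload


token = sign_token({"sub": "user-1", "exp": 2000}, b"demo-secret")
print(verify_token(token, b"demo-secret", now=1000))   # payload
print(verify_token(token, b"wrong-secret", now=1000))  # None
```

Even this short version has to handle algorithm pinning, constant-time comparison, expiry, and malformed input. A maintained library does the same work, and someone else patches it.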

A default tech stack exists

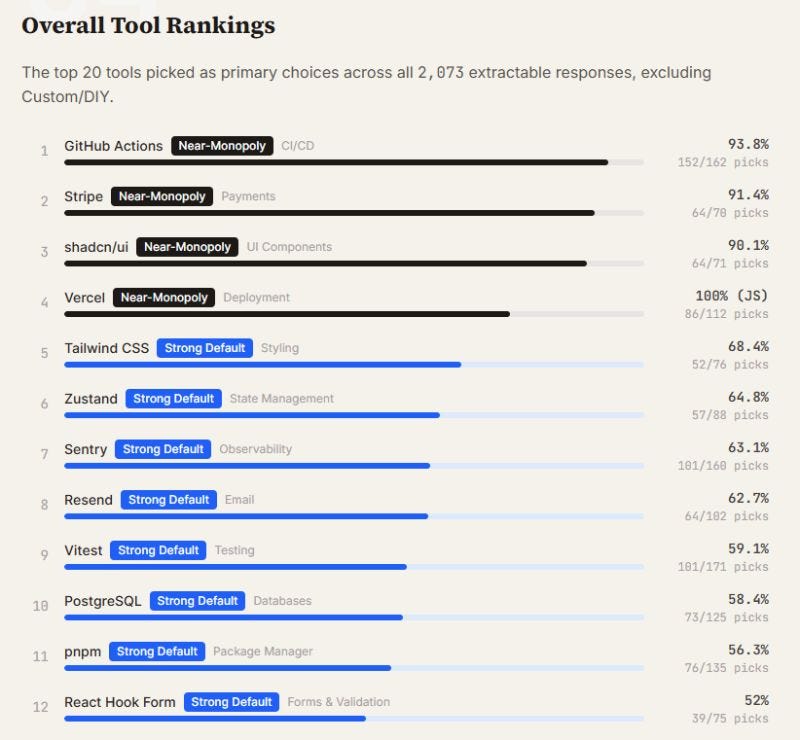

Where Claude Code does pick third-party tools, it converges hard:

GitHub Actions owns CI/CD at 94%

Stripe owns payments at 91%

shadcn/ui owns UI components at 90%

Vercel is a must for JavaScript projects.

The rest of the list: PostgreSQL, Tailwind CSS, Zustand, pnpm, Resend, Vitest.

What is important here is that these tools may not be the best option for your project, but they are what the model will choose for you. The model picks them not because they are the best fit for our project, but because it has seen them used widely in the wild (mostly on GitHub). So, the decision on the tech stack should be ours, not the AI model's.

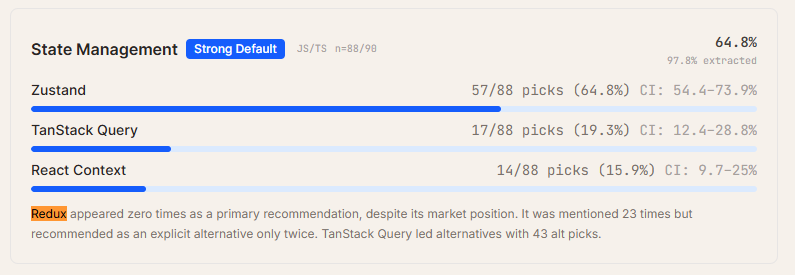

Redux is dead in AI-assisted code

Redux didn't get any primary picks in these 2,430 prompts. The model knows it exists, with 23 mentions and 2 alternative recommendations, but never actually chooses it.

Zustand wins state management at 65% instead. Express has it even worse, where it doesn't show up as a primary pick, an alternative, or even a passing suggestion.

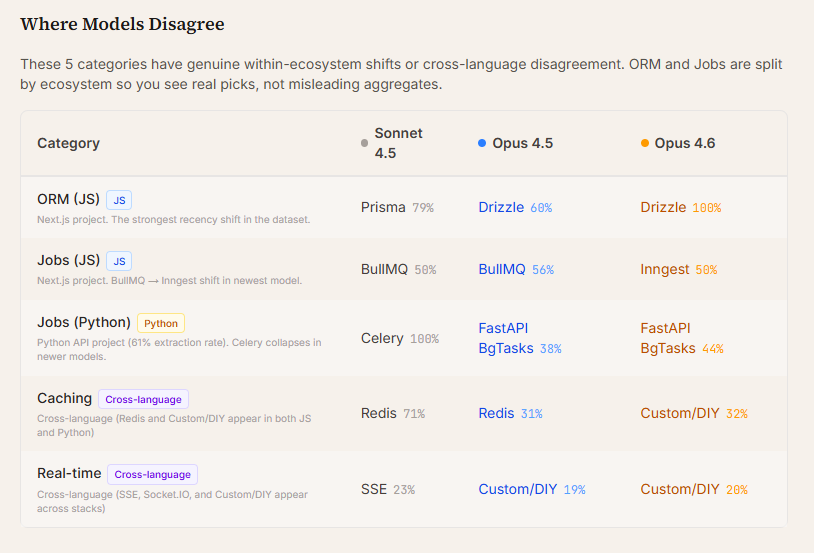

Newer models prefer newer tools

This is the clearest signal from this dataset. Prisma goes from 79% in Sonnet 4.5 to 0% in Opus 4.6. Drizzle takes over completely. In Python projects, Celery usage collapses from 100% to 0% as newer models prefer FastAPI's built-in background tasks. It tracks with what appeared in more recent training data.

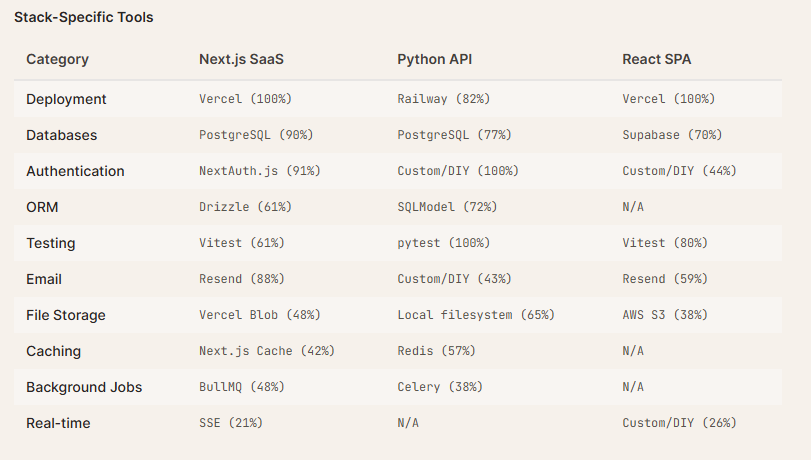

Context-awareness is real

The same model picks Vercel for JavaScript and Railway for Python. Drizzle for Next.js, SQLModel for FastAPI. This is not a fixed list; the agent reads the stack and adapts, which is more useful than a blanket recommendation.

Being absent from primary picks isn’t the same as being invisible

This is something worth noting in the report. A few technologies, such as Netlify, SendGrid, and Jest, were never chosen as the primary option. But they kept showing up as second choices. The model knows these tools and still recommends something else first. That gap is the one worth closing.

If we're using AI coding agents for greenfield projects, we're increasingly inheriting a default stack. Worth knowing what that stack is.

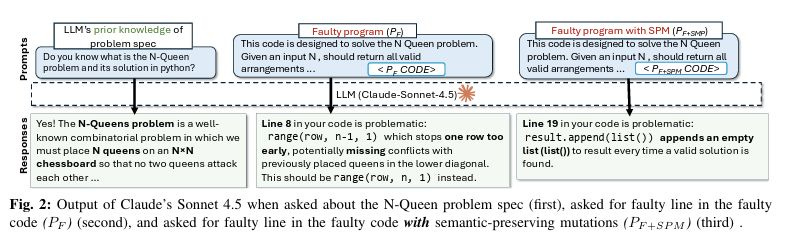

5. LLMs are not reading your code

We keep calling LLMs “AI coding assistants,” but we all know that writing code and understanding it are not the same. Researchers from Virginia Tech and Carnegie Mellon University just ran 750,000 debugging experiments across 10 models to assess how well LLMs actually understand code.

What they found out is that you should not blindly trust your AI coding assistant when debugging.

Here are the findings:

A renamed variable breaks the debugger. Researchers created a bug, confirmed that the LLM found it, then made changes that don’t touch the bug at all, such as renaming a variable or adding a comment. In 78% of cases, the model could no longer find the same bug, but it was still there. The variable names and comments changed, and that was enough.

Dead code is a trap. Adding code that never runs reduced bug-detection accuracy to 20.38%. Models treated dead code as live and flagged it as the source of the bug. But the bug was in another line. So, LLMs cannot reliably distinguish “this runs” from “this never runs.”

Models read top-to-bottom, not logically. 56% of correctly found bugs were in the first quarter of the file, and only 6% were in the last quarter. The further down the file the code sits, the less attention the model pays to it. If the bug lives in the bottom half of your file, the model is already less likely to find it.

Function reordering alone cut accuracy by 83%. Changing the order of functions in a Java file caused an 83% drop in debugging accuracy. The code still remained the same. Where the code physically sits in the file matters more to the model than what the code does.

So, obviously, this is a sign of pattern recognition, not real code understanding.
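To see what a change that "doesn't touch the bug" looks like, here is a small hypothetical example of our own (not taken from the study's benchmark). Both functions contain the same off-by-one bug. The second one only renames variables, adds a comment, and adds dead code.

```python
def average_original(values):
    total = 0
    for i in range(len(values) - 1):  # BUG: skips the last element
        total += values[i]
    return total / len(values)


def average_perturbed(samples):
    # Compute the mean of the samples.    <- added comment
    running_sum = 0                       # renamed from `total`
    if False:                             # dead code: this branch never runs
        running_sum = -1
    for idx in range(len(samples) - 1):   # same BUG, untouched
        running_sum += samples[idx]
    return running_sum / len(samples)


data = [1, 2, 3]
print(average_original(data), average_perturbed(data))  # same wrong answer twice
print(sum(data) / len(data))                            # the correct mean
```

The two functions behave identically on every input, so anything that actually traces execution finds the same bug in both. A model that finds it in the first but not in the second was matching on surface features.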

Newer models weren’t actually much better. Claude improved by ~1% between 3.7 and 4.5 Sonnet on this task, and Gemini improved by ~1.8%. Every model release comes with a new benchmark leaderboard and new headlines. But the ability to reason about code under realistic conditions is improving slowly.

Of course, all of these models were from the pre-Opus 4.5 era, so it would be interesting to see how newer ones do now.

These were best-case conditions

The study used single-file programs with ~250 lines, and each had a clear description of what the code should do. The authors say this was intentional. They wanted the best-case conditions. Real production code is multi-file, cross-module, and poorly documented. Models will almost certainly perform worse there.

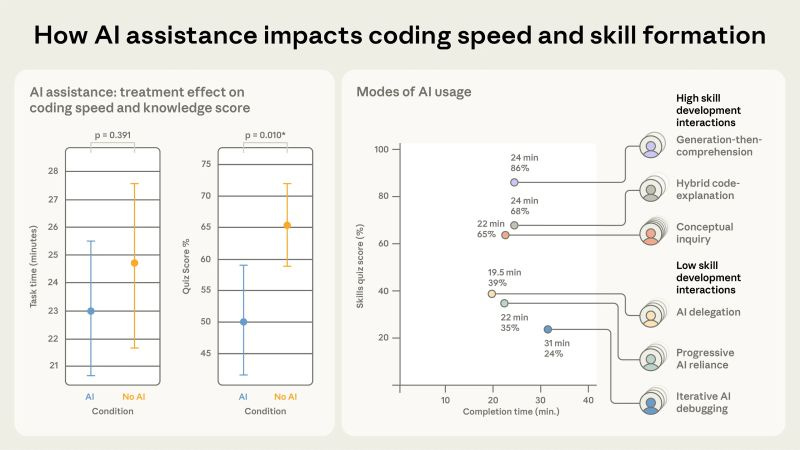

6. Should Juniors Code with AI?

We assume AI helps junior developers ramp up faster, allowing them to learn the codebase more quickly, ship sooner, and close the skill gap with seniors.

Anthropic just ran a randomized controlled trial that challenges this. 52 developers learned a new Python library for async programming, half with AI assistance, half without. The AI group scored 17 percentage points lower on comprehension tests. That's nearly two letter grades (50% vs 67%, p=0.01).
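The size of that gap depends on how you read it, so here is the arithmetic spelled out using only the two scores quoted above. The variable names are ours.

```python
control_score = 67  # percent, group without AI
ai_score = 50       # percent, group with AI

absolute_gap = control_score - ai_score            # in percentage points
relative_gap = absolute_gap / control_score * 100  # as a share of the control score

print(f"absolute gap: {absolute_gap} percentage points")
print(f"relative gap: {relative_gap:.1f}% lower than the control group")
# absolute gap: 17 percentage points
# relative gap: 25.4% lower than the control group
```

In relative terms, the AI group retained about a quarter less than the group that worked without it.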

The largest gap was in debugging. But this is the exact skill juniors need to catch errors in AI-generated code.

AI didn't even make them faster. The AI group finished about 2 minutes earlier, but the difference wasn't statistically significant. Some participants spent up to 30% of their time just writing prompts.

So, does how you use AI determine whether you learn at all? The study identified six interaction patterns. Three scored below 40%, three scored above 65%.

Low scorers either delegated everything to AI, started manually and progressively offloaded work, or used AI as a debugging crutch without building understanding. High scorers generated code and then asked follow-up questions, requested explanations of the code, or asked more conceptual questions.

This implies that unrestricted AI access during onboarding creates a capability gap. We get faster task completion today, but we lose the debugging instincts needed to validate AI output tomorrow.

We should take this into account before we onboard new junior developers.
